Soft Tokens, Hard Truths Summary

- Soft Tokens, Hard Truths

- 2025-09; Butt, Kwiatkowski, Labiad, Kempe, Ollivier

High-Level Summary

- Introduces a scalable method to learn continuous CoTs via RL

- Uses 'soft' tokens: mixtures of tokens together with noise on the input embeddings

- Matches discrete-token CoT for pass@1 metrics and surpasses for pass@32, suggesting greater CoT diversity

- Interestingly, the best-performing scenarios involve training with continuous CoT tokens but using discrete-tokens for inference

- Better out-of-domain evaluations, suggesting a 'softer touch'

- Tons of benchmark comparisons, leading to an excellent paper; shame the code isn't provided...

Elevator Pitch

Standard CoT is constrained by the discreteness of language tokens and the sequential nature of the sampling. Contrastingly, human cognition often operates over abstract and fluid concepts, rather than linguistic symbols. This motivates enabling LLMs to reason in continuous concept spaces.

Instead of sampling a token according to a (softmax) distribution, Soft Thinking simply takes the weighted average of all tokens. Soft Tokens, Hard Truths takes this a step further by adding noise—an essential component for (standard) RL training. This adds minimal computational overhead unlike, for example, COCONUT.

Contributions and Findings:

- a scalable continuous-token post-training algorithm;

- parity for pass@1 and improvements for pass@32;

- improved robustness and out-of-distribution performance;

- "hard inference on soft models".

Methodology

In a nutshell, average wrt the full distribution after the softmax step, instead of sampling, then add noise.

Notation for LLM Architecture

- A token is a one-hot (row) vector .

- The token embedding matrix takes a sequence (stack) of tokens and returns a sequence of embeddings.

- The transformer stack turns the sequence of input embeddings into a sequence of output embeddings.

- The output encodings are turned into logits by a decoding matrix . The logits are turned into next-token probabilities are obtained by a softmax: with .

Standard (Hard) Tokens

In standard, hard-token models, the next token is sampled according to the last component of : where is the one-hot encoding of token . This is applied inductively to get the sequences of next tokens.

Soft Thinking

In Soft Thinking, during the CoT phase, instead of sampling the next token according to , the probabilities are used to define a mixture of embeddings, which is used as the next input layer: where , and and .

Once the CoT phase is complete, the model samples normal (hard) tokens.

This model is not amenable to direct RL training via REINFORCE-like algorithms, since there is no underlying randomness. In principle, one could backprop through all timesteps of the CoT, but this leads to technical memory challenges not discussed in the paper.

Mixture of Inputs mixes a hard (sampled) token and the soft mixture, introducing randomness. The choice of mixture seemed pretty application-specific. The authors of that paper did not try RL training.

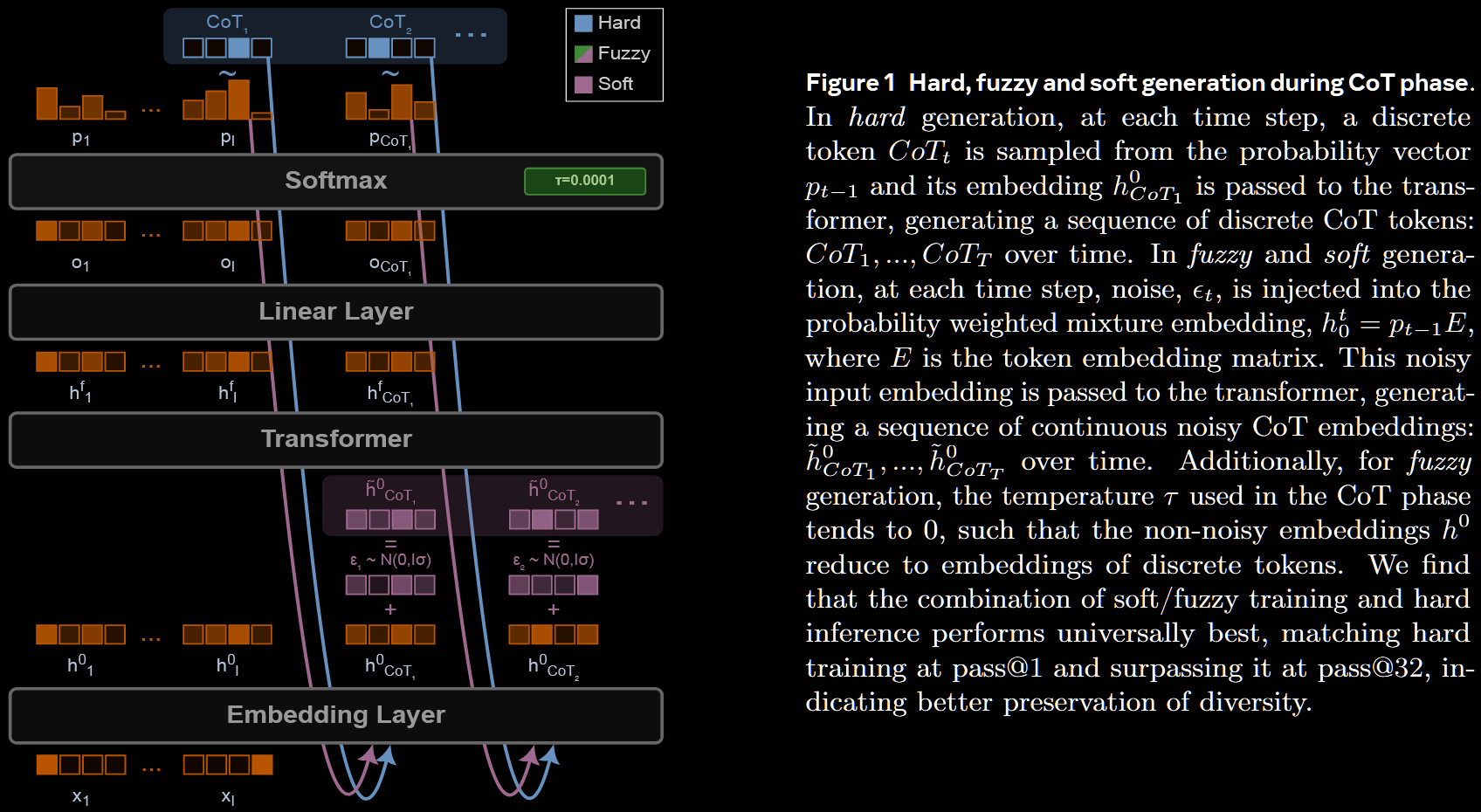

Noisy Soft Thinking: Soft Tokens and Fuzzy Tokens

The current paper proposes simply adding noise to the soft mixture, to make soft thinking RL trainable: at the next timestep, the transformer stack is fed .

They call this model soft tokens. When the CoT temperature , they use the term fuzzy tokens, since then the non-noisy embeddings reduce to (greedily chosen) discrete tokens.

RL on Soft Tokens

The randomness means that the usual RL algorithms, such as RLOO, GRPO, DAPO and so on, can now be applied in the usual way. Some details are given at the end of §3.

Experiments

There are many possible variants: soft tokens during training, hard sampling during inferences, trained on GSM8K and evaluated on MATH-500; etc. Laudably, many variations are run and compared—each taking up to four days!

Configurations

Each configuration was run with 3 independent random seeds; the tablets report the resulting mean and standard deviation.

-

Training settings

- Hard tokens: categorical sampling of ordinary hard CoT tokens with temp

- Soft tokens: full mixture at temp , plus Gaussian noise

- Fuzzy tokens: like soft tokens, but at temp (almost greedy + noise)

- Noise scale: where is the RMS of the norms of the token embeddings

- Train for 4k steps for each dataset (which is a lot!)

-

Inference settings

- Hard Greedy: discrete tokens, CoT temp (greedy)

- Hard Sample: discrete tokens, CoT temp

- Soft Greedy: no noise (scale ), CoT temp

- Soft Sample: noise scale , CoT temp

- Fuzzy Greedy: no noise (scale ), CoT temp (almost hard greedy)

- Fuzzy Sample: noise scale , CoT temp =

-

Base models

- Llama 3.2 3B Instruct

- Llama 3.1 8B Instruct

- Qwen 2.5 3B Instruct

-

Datasets

- Training (maths):

- GSM8k

- MATH

- DeepScaleR

- Evaluation (maths):

- GSM8K

- MATH (specifically, subset MATH-500)

- OlympiadBench (specifically, 675 questions with final answers and not containing images or figures)

- Evaluation (out-of-distribution):

- HellaSwag

- MMLU

- ARC/AI2

- Training (maths):

Results

So many comparisons are undertaken that it would be too much to report them here—and that's highly commendable. Only some of those in the body are repeated here, but the reader is encouraged to view the full paper.

Across datasets and models, the three training schemes are broadly comparable for greedy pass@1. This demonstrates that fuzzy and soft thinking are at least effective. Further, they have a clear overall advantage for pass@32 over hard training.

For all training settings, hard inference generally performs the best, both for pass@1 and pass@32. In particular, previous reported benefits of soft inference on hard (normal) training is not recovered.

.png)

The authors point out one particular set-up:

Llama-8B-Instruct, trained on GSM8K and evaluated on MATH-500, only achieves good scores when soft/fuzzy CoT training is used; classical hard-token CoT is ineffective.

Base Llama-8B-Instruct (no fine-tuning) has good performance on GSM8K (presumably due to exposure in training), but this does not translate to good performances on MATH. Hard fine-tuning makes things worse (on MATH), but soft/fuzzy improve. This suggests that soft/fuzzy training bring more generalisation on Llama-8B-Instruct.

There is typically a gap between hard greedy () and hard sample () inference settings for the base models and models trained with soft/fuzzy CoT, whereas the gap with hard CoT training is small. This is highlighted by Figure 3.

.png)

Potential Red Flag

Figure 3 does raise a red flag: by the time k reaches 32, the base model (no fine-tuning) is basically indistinguishable on pass@k from the models fine-tuned with soft/fuzzy CoT (hard CoT is worse).

This gives evidences towards the idea that RL fine-tuning focuses the distribution to improve sampling (pass@k for small k) but not capability (large k). Certainly, at least for some of the plots, it looks like the orange line is steepers, even at k = 32.

Certainly, soft/fuzzy CoT training appear to have less of a negative impact for large k. However, as much as the authors hint otherwise, on sample pass@1, hard CoT systematically beats soft/fuzzy. That said, they are close for greedy pass@1. This suggests that soft/fuzzy CoT is perhaps somewhere between the two (ie, the base and hard CoT)?